HP DL360 Gen9 Large BAR Memory (Tesla P10)

GPU card requests more memory-mapped I/O than is available

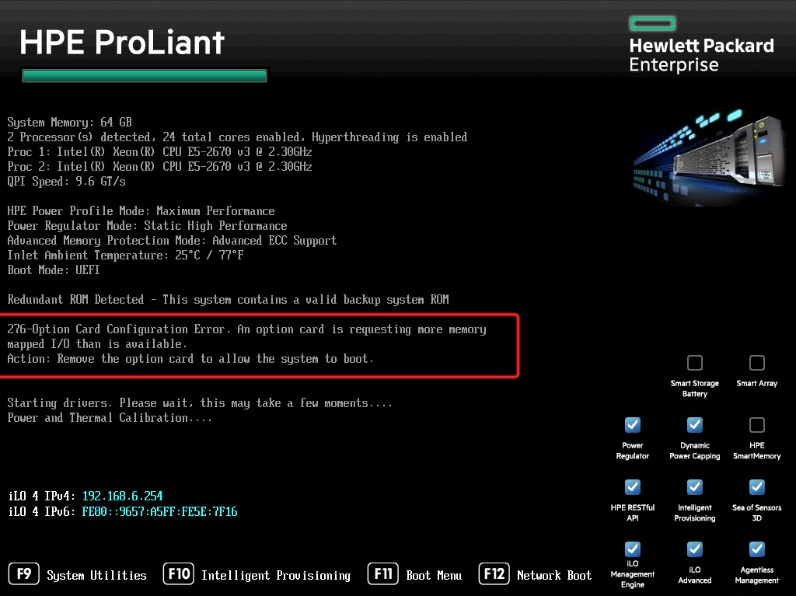

The first time I installed an NVIDIA Tesla P10 GPU compute card in an HPE ProLiant DL360 Gen9 server, the BIOS power-on self-test reported this error:

276 Option Card Configuration Error. An option card is requesting more memory mapped I/O than is available.

Action: Remove the option card to allow the system to boot.

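Cause

NVIDIA compute accelerator cards

Note

NVIDIA GPGPU Adapters system memory addressing limitations - IBM Systems also points out that NVIDIA Grid, NVIDIA Tesla, and NVIDIA Quadro cards are affected by a product design limitation: memory addressing restrictions mean they cannot be used in systems with more than 1 TB of memory.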

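VMware ESX configuration recommendations

The virtual machine runs a 64-bit operating system.

Both the physical host and the virtual machine boot in EFI mode.

If the GPU needs 16 GB or more of memory-mapped space (BAR1 memory), the host BIOS must be set up for GPU passthrough. The relevant option is usually named one of: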

Above 4G decoding

Memory mapped I/O above 4GB

PCI 64-bit resource handing above 4G

In the virtual machine's vmx file, enable 64-bit memory-mapped I/O (MMIO): pciPassthru.use64bitMMIO="TRUE"

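MMIO size: the recommended value is (n x GPU memory) rounded up to the next power of two:

Two GPUs with 16 GB each: 2 x 16 GB = 32 GB. Rounding 32 GB up to the next power of two gives 64 GB (32 is itself a power of two, so the next one is used).

Three GPUs with 16 GB each: 3 x 16 GB = 48 GB. Rounding 48 GB up to the next power of two gives 64 GB.

Alternatively, set it directly to twice the total memory of all GPUs assigned to the virtual machine: 2 x n x GPU memory (in GB).

Example setting:

pciPassthru.64bitMMIOSizeGB="64"

The virtual machine's memory should be at least 1.5 times the total memory of all GPUs assigned to it.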
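The sizing rule above can be sketched in Python. This is a minimal illustration, not VMware tooling: the function names `next_power_of_two_above`, `vmx_mmio_lines`, and `min_vm_memory_gb` are made up for this example, and it assumes that "next power of two" means strictly greater than the total, which is what the 32 GB to 64 GB example implies.

```python
def next_power_of_two_above(value_gb: int) -> int:
    """Return the smallest power of two strictly greater than value_gb."""
    if value_gb < 1:
        raise ValueError("value_gb must be a positive integer")
    # For n >= 1, 2 ** n.bit_length() is the first power of two above n.
    return 1 << value_gb.bit_length()


def vmx_mmio_lines(gpu_count: int, gpu_memory_gb: int) -> list:
    """Build the two vmx entries for passing through gpu_count GPUs."""
    total_gb = gpu_count * gpu_memory_gb
    size_gb = next_power_of_two_above(total_gb)
    return [
        'pciPassthru.use64bitMMIO="TRUE"',
        f'pciPassthru.64bitMMIOSizeGB="{size_gb}"',
    ]


def min_vm_memory_gb(gpu_count: int, gpu_memory_gb: int) -> float:
    """Suggested minimum VM memory: 1.5 times the total GPU memory."""
    return 1.5 * gpu_count * gpu_memory_gb


if __name__ == "__main__":
    # Two and three 16 GB GPUs, as in the examples above.
    for count in (2, 3):
        print(count, "GPUs:")
        for line in vmx_mmio_lines(count, 16):
            print("  " + line)
        print("  minimum VM memory (GB):", min_vm_memory_gb(count, 16))
```

The alternative rule of doubling the total GPU memory is not implemented here; for three 16 GB GPUs it would give 96 GB rather than 64 GB.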

HP DL360 Gen9 BIOS setting: PCI Express 64-Bit BAR Support

The VMware documentation advises:

Your host BIOS must be configured to support the large memory regions needed by these high-end PCI devices.

To enable this, find the host BIOS setting for “above 4G decoding” or “memory mapped I/O above 4GB” or “PCI 64 bit resource handing above 4G” and enable it.

The exact wording of this option varies by system vendor, though the option is often found in the PCI section of the BIOS menu.

Consult your system provider if necessary to enable this option.

However, after going through the BIOS settings repeatedly, I could not find any PCI configuration section.

In the thread "enable large BAR support", though, someone asked a similar question about finding the BIOS setting for '64-bit IO', and the term Large BAR came up. Sure enough, HPE documentation uses the terms Support 64-Bit Addressing and Large BAR. Searching for "hpe dl360 gen9 enable large BAR support" turns up the support document Advisory: (Revision) HP ProLiant SL250s Gen8 and ProLiant SL270s Gen8 Servers - Servers Configured with a Large Number Of NVIDIA Tesla or Intel Xeon GPU Computing Modules Require the System ROM to Support 64-Bit Addressing (Large BAR) Support:

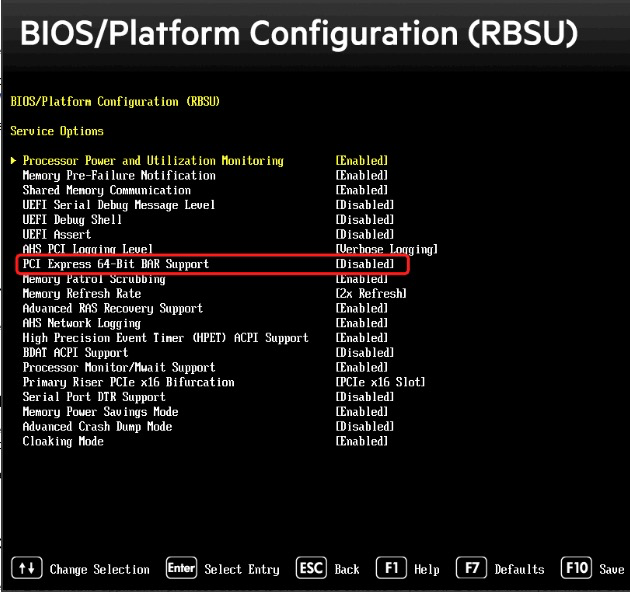

启动服务器,在BIOS提示时,按下

F9进入ROM-Based Setup Utility (RBSU)在RBSU中,按下

Ctrl + A,此时会进入一个Service Options– WOW,打开了一个新世界,原来很多选项都在这里

在

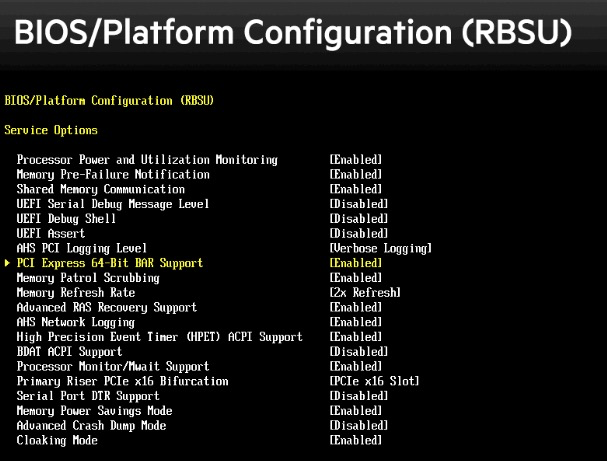

Service Options中,通过上下键移动菜单高亮,选择PCI Express 64-Bit BAR Support,默认这个选项是Disabled,按下回车键进入修改选项,将这个参数修改成Enabled

退出保存,然后重启服务器,此时

Large BAR就已经激活

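Note

According to the HPE documentation, Large BAR is always enabled while System Maintenance Switch 9 is set to ON, so to disable Large BAR from RBSU you must first set System Maintenance Switch 9 to the OFF position.

Boot into Ubuntu Linux (in my private cloud architecture, Ubuntu is the operating system of the physical host) and run: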

lspci -vvv

The newly added NVIDIA device shows up:

08:00.0 3D controller: NVIDIA Corporation Device 1b39 (rev a1)
    Subsystem: NVIDIA Corporation Device 1217
    Physical Slot: 1
    Control: I/O- Mem+ BusMaster+ SpecCycle- MemWINV- VGASnoop- ParErr+ Stepping- SERR+ FastB2B- DisINTx+
    Status: Cap+ 66MHz- UDF- FastB2B- ParErr- DEVSEL=fast >TAbort- <TAbort- <MAbort- >SERR- <PERR- INTx-
    Latency: 0, Cache Line Size: 64 bytes
    Interrupt: pin A routed to IRQ 75
    NUMA node: 0
    Region 0: Memory at 93000000 (32-bit, non-prefetchable) [size=16M]
    Region 1: Memory at 39000000000 (64-bit, prefetchable) [size=32G]
    Region 3: Memory at 39800000000 (64-bit, prefetchable) [size=32M]
    Capabilities: [60] Power Management version 3
        Flags: PMEClk- DSI- D1- D2- AuxCurrent=0mA PME(D0-,D1-,D2-,D3hot-,D3cold-)
        Status: D0 NoSoftRst+ PME-Enable- DSel=0 DScale=0 PME-
    Capabilities: [68] MSI: Enable+ Count=1/1 Maskable- 64bit+
        Address: 00000000fee00718 Data: 0000
    Capabilities: [78] Express (v2) Endpoint, MSI 00
        DevCap: MaxPayload 256 bytes, PhantFunc 0, Latency L0s unlimited, L1 <64us
            ExtTag+ AttnBtn- AttnInd- PwrInd- RBE+ FLReset- SlotPowerLimit 0.000W
        DevCtl: CorrErr- NonFatalErr+ FatalErr+ UnsupReq-
            RlxdOrd+ ExtTag+ PhantFunc- AuxPwr- NoSnoop+
            MaxPayload 256 bytes, MaxReadReq 4096 bytes
        DevSta: CorrErr+ NonFatalErr- FatalErr- UnsupReq+ AuxPwr- TransPend-
        LnkCap: Port #0, Speed 8GT/s, Width x16, ASPM not supported
            ClockPM+ Surprise- LLActRep- BwNot- ASPMOptComp+
        LnkCtl: ASPM Disabled; RCB 64 bytes Disabled- CommClk+
            ExtSynch- ClockPM- AutWidDis- BWInt- AutBWInt-
        LnkSta: Speed 2.5GT/s (downgraded), Width x16 (ok)
            TrErr- Train- SlotClk+ DLActive- BWMgmt- ABWMgmt-
        DevCap2: Completion Timeout: Range AB, TimeoutDis+, NROPrPrP-, LTR+
            10BitTagComp-, 10BitTagReq-, OBFF Via message, ExtFmt-, EETLPPrefix-
            EmergencyPowerReduction Not Supported, EmergencyPowerReductionInit-
            FRS-, TPHComp-, ExtTPHComp-
            AtomicOpsCap: 32bit- 64bit- 128bitCAS-
        DevCtl2: Completion Timeout: 50us to 50ms, TimeoutDis-, LTR-, OBFF Disabled
            AtomicOpsCtl: ReqEn-
        LnkCtl2: Target Link Speed: 8GT/s, EnterCompliance- SpeedDis-
            Transmit Margin: Normal Operating Range, EnterModifiedCompliance- ComplianceSOS-
            Compliance De-emphasis: -6dB
        LnkSta2: Current De-emphasis Level: -6dB, EqualizationComplete+, EqualizationPhase1+
            EqualizationPhase2+, EqualizationPhase3+, LinkEqualizationRequest-
    Capabilities: [100 v1] Virtual Channel
        Caps: LPEVC=0 RefClk=100ns PATEntryBits=1
        Arb: Fixed- WRR32- WRR64- WRR128-
        Ctrl: ArbSelect=Fixed
        Status: InProgress-
        VC0: Caps: PATOffset=00 MaxTimeSlots=1 RejSnoopTrans-
            Arb: Fixed- WRR32- WRR64- WRR128- TWRR128- WRR256-
            Ctrl: Enable+ ID=0 ArbSelect=Fixed TC/VC=ff
            Status: NegoPending- InProgress-
    Capabilities: [250 v1] Latency Tolerance Reporting
        Max snoop latency: 0ns
        Max no snoop latency: 0ns
    Capabilities: [128 v1] Power Budgeting <?>
    Capabilities: [420 v2] Advanced Error Reporting
        UESta: DLP- SDES- TLP- FCP- CmpltTO- CmpltAbrt- UnxCmplt- RxOF- MalfTLP- ECRC- UnsupReq- ACSViol-
        UEMsk: DLP- SDES- TLP- FCP- CmpltTO- CmpltAbrt- UnxCmplt- RxOF- MalfTLP- ECRC- UnsupReq- ACSViol-
        UESvrt: DLP+ SDES+ TLP- FCP+ CmpltTO- CmpltAbrt- UnxCmplt- RxOF+ MalfTLP+ ECRC- UnsupReq- ACSViol-
        CESta: RxErr- BadTLP- BadDLLP- Rollover- Timeout- AdvNonFatalErr-
        CEMsk: RxErr- BadTLP- BadDLLP- Rollover- Timeout- AdvNonFatalErr+
        AERCap: First Error Pointer: 00, ECRCGenCap- ECRCGenEn- ECRCChkCap- ECRCChkEn-
            MultHdrRecCap- MultHdrRecEn- TLPPfxPres- HdrLogCap-
        HeaderLog: 00000000 00000000 00000000 00000000
    Capabilities: [600 v1] Vendor Specific Information: ID=0001 Rev=1 Len=024 <?>
    Capabilities: [900 v1] Secondary PCI Express
        LnkCtl3: LnkEquIntrruptEn-, PerformEqu-
        LaneErrStat: 0
    Kernel driver in use: nouveau
    Kernel modules: nvidiafb, nouveau
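To confirm that Large BAR took effect, the Region lines in this output are the part to look at: Region 1 is a 32G 64-bit BAR placed far above the 4 GiB boundary. The following Python sketch pulls those lines apart. It is only an illustration: `parse_regions` is a name invented here, and the regular expression assumes the exact line layout shown above (extra markers that lspci sometimes prints, such as `[disabled]`, are not handled).

```python
import re

# Matches lines such as:
#   Region 1: Memory at 39000000000 (64-bit, prefetchable) [size=32G]
REGION_RE = re.compile(
    r"Region (\d+): Memory at ([0-9a-f]+) "
    r"\((32|64)-bit, (non-)?prefetchable\) \[size=(\d+)([KMGT]?)\]"
)
UNIT = {"": 1, "K": 1 << 10, "M": 1 << 20, "G": 1 << 30, "T": 1 << 40}
FOUR_GIB = 1 << 32


def parse_regions(lspci_text):
    """Return one dict per memory BAR found in lspci -vvv output."""
    regions = []
    for match in REGION_RE.finditer(lspci_text):
        index, address, width, non_prefix, size, unit = match.groups()
        regions.append({
            "index": int(index),
            "address": int(address, 16),
            "width": int(width),
            "prefetchable": non_prefix is None,
            "size": int(size) * UNIT[unit],
        })
    return regions


# The three Region lines from the output above.
SAMPLE = """\
Region 0: Memory at 93000000 (32-bit, non-prefetchable) [size=16M]
Region 1: Memory at 39000000000 (64-bit, prefetchable) [size=32G]
Region 3: Memory at 39800000000 (64-bit, prefetchable) [size=32M]
"""

if __name__ == "__main__":
    for region in parse_regions(SAMPLE):
        placement = "above" if region["address"] >= FOUR_GIB else "below"
        print(
            f"Region {region['index']}: {region['size'] // (1 << 20)} MiB, "
            f"{region['width']}-bit, mapped {placement} 4 GiB"
        )
```

On a live system the text could come from `subprocess.run(["lspci", "-vvv"], capture_output=True, text=True).stdout` instead of `SAMPLE`.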

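Note

NVIDIA devices need the proprietary driver provided by NVIDIA, which the default Ubuntu repositories do not ship. "Linux view GPU information display? What kind of video card is this?" describes the usual way to install the graphics driver:

Add the Ubuntu graphics drivers PPA (Proprietary GPU Drivers):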

sudo add-apt-repository ppa:graphics-drivers/ppa
sudo apt-get update

Install the driver:

sudo apt install nvidia-driver-XXX

Download and install CUDA